RAID1: replacing a disk with a larger one

mdadm --fail /dev/md0 /dev/sda1

mdadm --remove /dev/md0 /dev/sda1

mdadm --manage --stop /dev/md0

The last command stops (deactivates) the md0 array completely.

Installing mdadm on Linux

The tool that we are going to use to create, assemble, manage, and monitor our software RAID-1 is called mdadm (short for multiple disks admin). On Linux distros such as Fedora, CentOS, RHEL or Arch Linux, mdadm is available by default. On Debian-based distros, mdadm can be installed with aptitude or apt-get.

Fedora, CentOS or RHEL

As mdadm comes pre-installed, all you have to do is start the RAID monitoring service and configure it to start automatically at boot:

# systemctl start mdmonitor

# systemctl enable mdmonitor

For CentOS/RHEL 6, use these commands instead:

# service mdmonitor start

# chkconfig mdmonitor on

Debian, Ubuntu or Linux Mint

On Debian and its derivatives, mdadm can be installed with aptitude or apt-get:

# apt-get install mdadm

On Ubuntu, you will be asked to configure postfix MTA for sending out email notifications (as part of RAID monitoring). You can skip it for now.

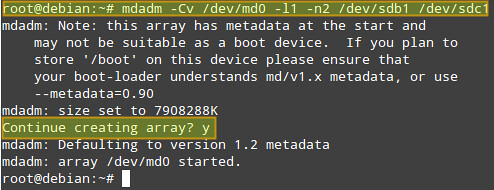

Create a RAID-1 Array

Once you have created the primary partition on each drive, use the following command to create a RAID-1 array:

# mdadm -Cv /dev/md0 -l1 -n2 /dev/sdb1 /dev/sdc1

Where:

- -Cv: creates an array and produces verbose output.

- /dev/md0: is the name of the array.

- -l1 (l as in "level"): indicates that this will be a RAID-1 array.

- -n2: indicates that we will add two partitions to the array, namely /dev/sdb1 and /dev/sdc1.

The above command is equivalent to:

# mdadm --create --verbose /dev/md0 --level=1 --raid-devices=2 /dev/sdb1 /dev/sdc1

If you also want a spare device that can replace a faulty disk in the future, append '--spare-devices=1 /dev/sdd1' to the above command.
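As a sketch of the spare-device variant, the long-form create command with the extra option appended could look as follows. The `run` helper is a hypothetical dry-run wrapper (not part of mdadm) that only prints the command unless `RUN=1` is set, so the example can be tried without touching any disks; /dev/sdd1 is the spare partition named above.

```shell
# Hypothetical dry-run wrapper: prints the command unless RUN=1 is set.
run() {
    if [ "${RUN:-0}" = "1" ]; then
        "$@"
    else
        printf '%s\n' "$*"
    fi
}

# RAID-1 over /dev/sdb1 and /dev/sdc1, with /dev/sdd1 as a spare.
# Run as root with RUN=1 to actually create the array.
run mdadm --create --verbose /dev/md0 --level=1 --raid-devices=2 \
    /dev/sdb1 /dev/sdc1 --spare-devices=1 /dev/sdd1
```

With the default dry run, the script just echoes the full mdadm command line so it can be reviewed first.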

Answer "y" when prompted if you want to continue creating an array, then press Enter:

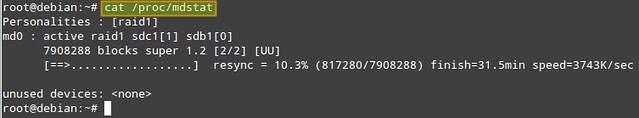

You can check the progress with the following command:

# cat /proc/mdstat

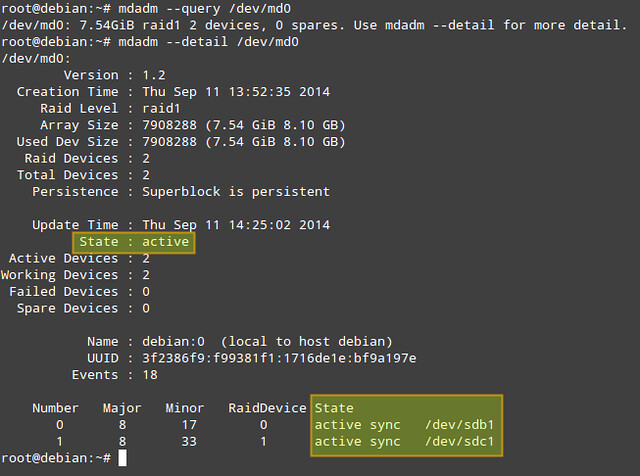

Another way to obtain more information about a RAID array (both while it’s being assembled and after the process is finished) is:

# mdadm --detail /dev/md0 (or mdadm -D /dev/md0)

Of the information provided by 'mdadm -D', perhaps the most useful is that which shows the state of the array. The active state means that there is currently I/O activity happening. Other possible states are clean (all I/O activity has been completed), degraded (one of the devices is faulty or missing), resyncing (the system is recovering from an unclean shutdown such as a power outage), or recovering (a new drive has been added to the array, and data is being copied from the other drive onto it), to name the most common states.

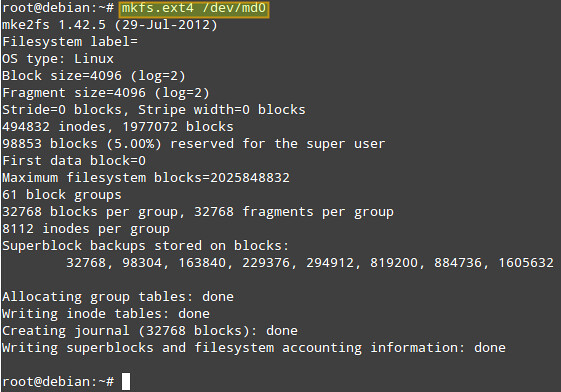
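For scripting, the state can be pulled out of the 'mdadm -D' report. The following is a minimal sketch: `array_state` is a hypothetical helper name, and it assumes the report contains a line of the form `State : ...`; adjust the pattern if your mdadm version prints it differently. The sample input is illustrative, not captured from a real system.

```shell
# Hypothetical helper: read `mdadm -D` output on stdin and print the array state.
# Assumes a line such as "          State : clean, degraded".
array_state() {
    sed -n 's/^[[:space:]]*State[[:space:]]*:[[:space:]]*//p' |
        sed 's/[[:space:]]*$//'
}

# Usage (as root): mdadm -D /dev/md0 | array_state
# Illustrative input only:
printf '     Raid Level : raid1\n          State : clean, degraded \n' | array_state
```

The pattern is anchored to a line that begins with "State", so the per-device table header (which merely ends in "State") is ignored.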

Formatting and Mounting a RAID Array

The next step is formatting the array (with ext4 in this example):

# mkfs.ext4 /dev/md0

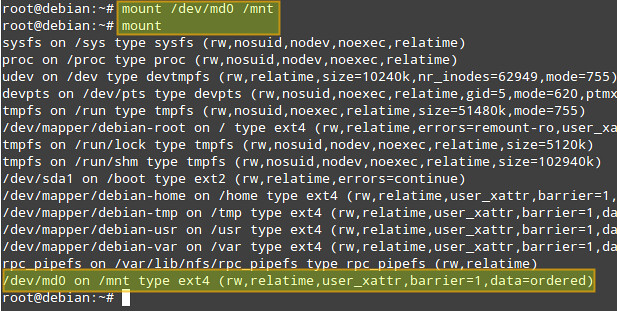

Now let’s mount the array, and verify that it was mounted correctly:

# mount /dev/md0 /mnt

# mount

Monitor a RAID Array

The mdadm tool comes with RAID monitoring capability built in. When mdadm is set to run as a daemon (which is the case with our RAID setup), it periodically polls existing RAID arrays, and reports on any detected events via email notification or syslog logging. Optionally, it can also be configured to invoke contingency commands (e.g., retrying or removing a disk) upon detecting any critical errors.
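To give a feel for what such polling looks at, here is a minimal sketch (not mdadm's actual implementation) that lists arrays with a missing member by reading the kernel's /proc/mdstat status file. The function name `degraded_arrays` is hypothetical; the sketch relies on the member map that /proc/mdstat prints for each array, such as `[UU]` or `[U_]`, where `_` marks a missing or failed device.

```shell
# Hypothetical helper: print the name of every md array whose member map
# (e.g. [U_]) contains "_", meaning a device is missing or failed.
# Reads the file given as $1, defaulting to /proc/mdstat.
degraded_arrays() {
    awk '
        /^md[^ ]* :/   { name = $1 }                  # e.g. md0 : active raid1 ...
        /\[[U_]+\] *$/ { if ($NF ~ /_/) print name }  # e.g. ... [2/1] [U_]
    ' "${1:-/proc/mdstat}"
}

# Usage: degraded_arrays             (live status)
#        degraded_arrays saved.txt   (a saved copy of /proc/mdstat)
```

A cron job could call this and send a mail when the output is non-empty, although mdadm's own monitor mode already covers that case.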

By default, mdadm scans all existing partitions and MD arrays, and logs any detected event to /var/log/syslog. Alternatively, you can specify devices and RAID arrays to scan in mdadm.conf located in /etc/mdadm/mdadm.conf (Debian-based) or /etc/mdadm.conf (Red Hat-based), in the following format. If mdadm.conf does not exist, create one.

DEVICE /dev/sd[bcde]1 /dev/sd[ab]1

ARRAY /dev/md0 devices=/dev/sdb1,/dev/sdc1

ARRAY /dev/md1 devices=/dev/sdd1,/dev/sde1

.....

# optional email address to notify events

MAILADDR your@email.com

After modifying the mdadm configuration, restart the mdadm daemon:

On Debian, Ubuntu or Linux Mint:

# service mdadm restart

On Fedora, CentOS/RHEL 7:

# systemctl restart mdmonitor

On CentOS/RHEL 6:

# service mdmonitor restart

Auto-mount a RAID Array

Now we will add an entry in the /etc/fstab to mount the array in /mnt automatically during boot (you can specify any other mount point):

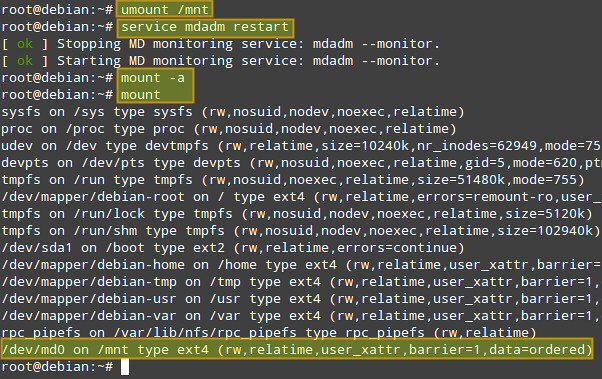
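A typical entry could look like the one printed by the sketch below. The helper `fstab_entry` is hypothetical, and the mount options (`defaults`) and the dump/fsck fields (`0 2`) are assumptions rather than values taken from the screenshot; ext4 and /mnt come from the steps above.

```shell
# Hypothetical helper: print an fstab entry for a device, mount point and
# filesystem type. "defaults 0 2" (default options, no dump, fsck after the
# root filesystem) is an assumption; adjust to taste.
fstab_entry() {
    printf '%s\t%s\t%s\tdefaults\t0\t2\n' "$1" "$2" "$3"
}

fstab_entry /dev/md0 /mnt ext4
# As root, append it with: fstab_entry /dev/md0 /mnt ext4 >> /etc/fstab
```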

To verify that the entry works, we now unmount the array, restart the mdadm monitoring service, and remount everything listed in /etc/fstab. We can see that /dev/md0 has been mounted as per the entry we just added to /etc/fstab:

# umount /mnt

# service mdadm restart (on Debian, Ubuntu or Linux Mint)

# systemctl restart mdmonitor (on Fedora, CentOS/RHEL 7)

# service mdmonitor restart (on CentOS/RHEL 6)

# mount -a

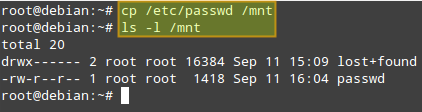

Now we are ready to access the RAID array via the /mnt mount point. To test the array, we’ll copy the /etc/passwd file (any other file will do) into /mnt:

# cp /etc/passwd /mnt

On Debian, we need to tell the mdadm daemon to automatically start the RAID array during boot by setting the AUTOSTART variable to true in the /etc/default/mdadm file:

AUTOSTART=true

Simulating Drive Failures

We will simulate a faulty drive and remove it with the following commands. Note that in a real-life scenario it is not necessary to mark a device as faulty first, as it will already be in that state after a failure.

First, unmount the array:

# umount /mnt

Now, notice how the output of 'mdadm -D /dev/md0' changes after each of the commands below.

# mdadm --fail /dev/md0 /dev/sdb1 #Marks /dev/sdb1 as faulty

# mdadm --remove /dev/md0 /dev/sdb1 #Removes /dev/sdb1 from the array

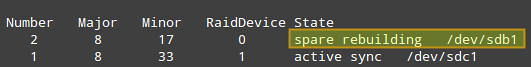

Afterwards, when you have a replacement drive, add it to the array:

# mdadm --add /dev/md0 /dev/sdb1

The data then immediately starts being rebuilt onto /dev/sdb1.
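To follow the rebuild from a script, the percentage can be read from /proc/mdstat. This is a minimal sketch: `rebuild_progress` is a hypothetical helper, and it assumes the progress line contains the word "recovery" or "resync" followed by a field ending in `%`.

```shell
# Hypothetical helper: print the rebuild percentage(s) found in mdstat-style
# text. Reads the file given as $1, defaulting to /proc/mdstat.
rebuild_progress() {
    awk '/recovery|resync/ {
        for (i = 1; i <= NF; i++) if ($i ~ /%$/) print $i
    }' "${1:-/proc/mdstat}"
}

# Usage: rebuild_progress
# Prints nothing when no array is currently rebuilding.
```

Combined with `watch -n 5 cat /proc/mdstat`, this gives both an interactive and a scriptable view of the same information.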

Note that the steps detailed above apply to systems with hot-swappable disks. If you do not have such hardware, you will also have to stop the array and shut down the system before replacing the drive:

# mdadm --stop /dev/md0

# shutdown -h now

Then install the new drive and re-assemble the array:

# mdadm --assemble /dev/md0 /dev/sdb1 /dev/sdc1
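One caveat: assembling relies on the md superblock already present on each member, so a brand-new, empty partition will most likely be rejected by the command above. A possible alternative, sketched here under that assumption, starts the array degraded from the surviving member and then adds the new partition. The `run` helper is a hypothetical dry-run wrapper that only prints the commands unless `RUN=1` is set; which device survived and which is new are assumptions for illustration.

```shell
# Hypothetical dry-run wrapper: prints each command unless RUN=1 is set.
run() {
    if [ "${RUN:-0}" = "1" ]; then
        "$@"
    else
        printf '%s\n' "$*"
    fi
}

# Assumption: /dev/sdc1 is the surviving member and /dev/sdb1 is the new,
# already partitioned but still empty replacement.
run mdadm --assemble --run /dev/md0 /dev/sdc1   # start the array degraded
run mdadm --add /dev/md0 /dev/sdb1              # add the new partition; rebuild begins
```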

Hope this helps.